Adobe Digital Insights reported a 4,700% year-over-year jump in generative AI traffic to U.S. retail sites as of mid-2025.

But the problem is…

Most eCommerce brands are completely invisible in LLM citations and have no idea.

When someone asks an AI search tool a question related to your brand or products, the AI picks its sources based on well-structured pages, trusted domains, and content it can actually extract a clean answer from. If your site doesn’t check those boxes, you don’t get the click.

And that click matters more than most people realize. Visits referred by ChatGPT convert at 11.4%, compared to just 5.3% from organic search (Similarweb).

This goes beyond eCommerce AI SEO tactics. If you’re still relying on traditional playbooks, it’s worth understanding how to rank in AI search because that’s where buying decisions begin now.

This blog covers what LLM citations are, what it takes to earn them, and how to track your AI visibility across ChatGPT, Perplexity, and Google AI Overviews.

Key Takeaways

- LLM citations drive higher-converting traffic than traditional search results.

- RAG powers most citations, not training data. Content freshness is everything.

- One query creates multiple citation opportunities through query fan-out.

- Topical authority, clean formatting, and original data earn you consistent citations.

- Each AI platform picks sources differently. One strategy won’t cover all of them.

What are LLM Citations?

Whenever you ask an AI assistant a question, and it references a specific source, whether by naming it or linking to it, that reference is called a citation. Think of it as the AI’s way of giving credit: “This is where the information came from.”

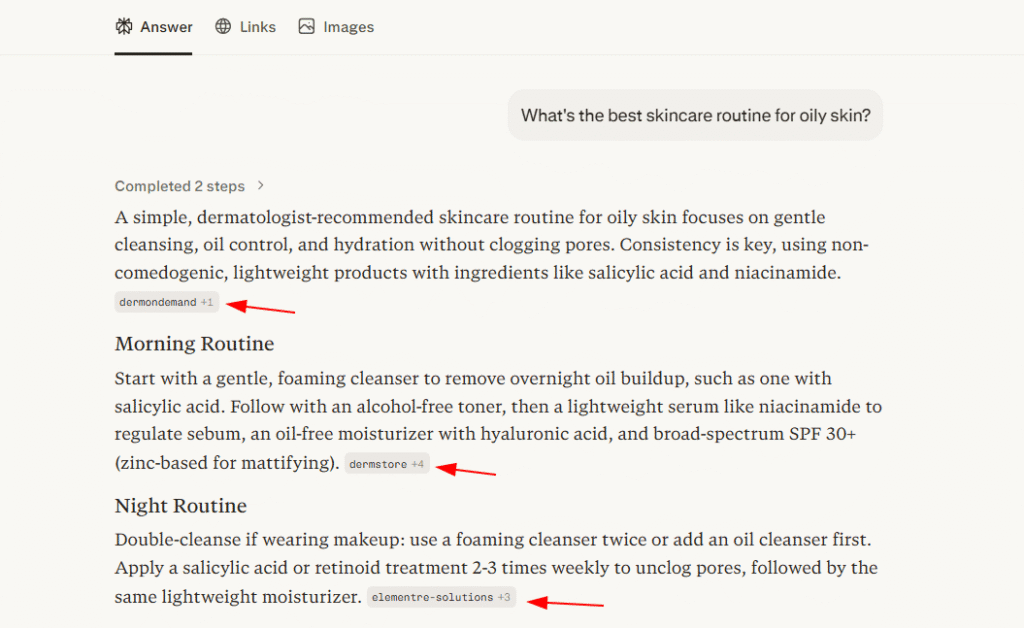

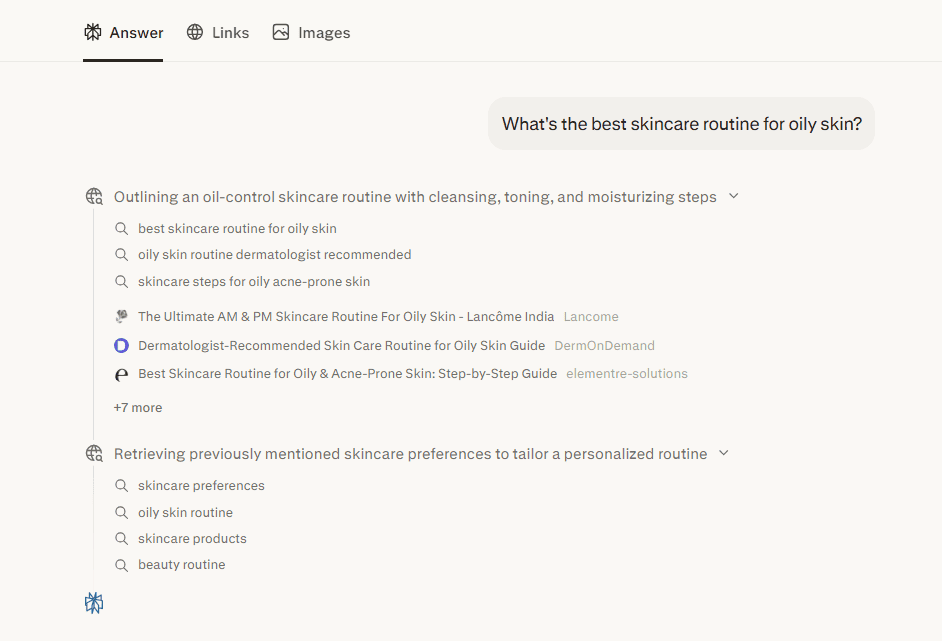

Here’s a simple way to see this in action. I asked a basic query like “What’s the best skincare routine for oily skin?” and saw how it responds.

AI mentions work a bit differently. They’re direct references to a brand or entity inside a response, but without any hyperlink. No clickable link, just a name drop. They still do meaningful work for brand awareness and visibility, just not in the same way a citation does.

To illustrate the contrast, I asked ChatGPT the same question: “What’s the best skincare routine for oily skin?” The tool delivered a solid answer and named its source, but there was nothing to click through to.

That’s the core difference worth paying attention to. A citation sends traffic directly to a source and builds its authority over time. A mention builds familiarity and brand recognition, but stops short of a referral.

It looks like a minor distinction on the surface. In practice, it has a real impact on how your brand grows through AI search.

How LLM Citations Actually Work

To get cited by AI systems, you first need to understand the mechanics behind how LLMs work and how they retrieve and use information. There are two distinct processes involved, and knowing the difference changes how you approach optimization.

How AI Models Store and Retrieve Information

| Feature | Training Data | Retrieval-Augmented Generation (Standard) | Agentic RAG (2026) |
| --- | --- | --- | --- |
| Memory Type | Permanent / Long-term | Live / Short-term | Multi-step / Iterative |
| Knowledge Cutoff | Static (months or years old) | Real-time web access | Real-time + deep verification |
| Update Speed | Very slow (requires retraining) | Instantaneous | Instantaneous and adaptive |
| Primary Goal | Foundational logic and broad facts | Providing current citations | Verifying claims and “stress-testing” |
| Search Method | None (internal weights only) | Single-pass search | Multi-source, autonomous research |
| Reliability | High for general concepts | High for recent events | Highest (cross-references data) |

Live Web Search in AI Tools

Not every query kicks off a live web search. But certain types of questions almost always do.

When someone asks about something that changes fast, like recent news or a newly launched product, the AI doesn’t rely on what it memorized during training. It goes out and finds current information. The same happens with health, finance, legal, and safety topics, where being wrong carries real consequences. The model looks for a citable, verifiable source.

Requests for data and statistics follow the same pattern. Ask for a specific number, and the AI typically pulls it from a live source rather than guessing. Niche or underrepresented topics behave similarly. If a subject wasn’t covered well in training data, the model searches outward by default because it simply doesn’t have enough to work with internally.

If your content sits in any of these categories, you’re already in a solid position to earn LLM citations.

Here’s a quick example. I ran a health-related query on Google, and the Google AI Overviews result came back with clear citations attached so I could verify the source directly.

One Query Creates Multiple Citation Opportunities

Most people assume AI search tools process a query the way a human would read it. That’s not quite what’s happening under the hood. When someone types in a question, AI tools typically split it into several smaller sub-queries running in parallel behind the scenes. This is called query fan-out, and it’s still one of the most overlooked ideas in AI search visibility strategy.

Take a query like “What’s the best skincare routine for oily skin?” The AI isn’t just processing that single question. It’s likely generating sub-queries such as “best skincare products for oily skin 2026,” “affordable skincare routine for oily skin,” and “comparison of CeraVe vs The Ordinary vs Neutrogena for oily skin” all at the same time.

Every one of those sub-queries is its own citation opportunity. And most of them go unclaimed.

This should shift how you think about structuring content. If your page answers the main question but ignores everything that branches off it, you’re walking away from the bulk of those opportunities. Build content around complete topics rather than a single primary keyword. That approach gives you a much better shot at appearing across the full fan-out.
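One rough way to spot unclaimed sub-queries is to compare them against the headings on your page. The sketch below is only a keyword-overlap heuristic, not how any AI tool actually matches content: the stopword list, the `min_overlap` threshold, and the sample headings are all assumptions made for illustration.

```python
import re

# Illustrative stopword list (an assumption, not a standard one).
STOPWORDS = {"a", "an", "the", "for", "of", "in", "to", "and", "vs", "is", "what", "s", "best"}

def keywords(text):
    """Lowercase the text and return its non-stopword tokens as a set."""
    return {t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS}

def uncovered_subqueries(subqueries, headings, min_overlap=0.6):
    """Return the sub-queries that no heading covers.

    A heading "covers" a sub-query when it contains at least `min_overlap`
    of the sub-query's keywords. The threshold is a hypothetical default.
    """
    heading_words = [keywords(h) for h in headings]
    gaps = []
    for query in subqueries:
        q_words = keywords(query)
        if not q_words:
            continue  # nothing meaningful to match
        best = max((len(q_words & h) / len(q_words) for h in heading_words), default=0.0)
        if best < min_overlap:
            gaps.append(query)
    return gaps

subqueries = [
    "best skincare products for oily skin 2026",
    "affordable skincare routine for oily skin",
    "comparison of CeraVe vs The Ordinary vs Neutrogena for oily skin",
]
headings = [  # hypothetical headings from your own page
    "What is the best skincare routine for oily skin?",
    "Which skincare products work for oily skin in 2026?",
]
print(uncovered_subqueries(subqueries, headings))
```

With these sample headings, only the brand comparison sub-query comes back as a gap, which points to the section the page is missing.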

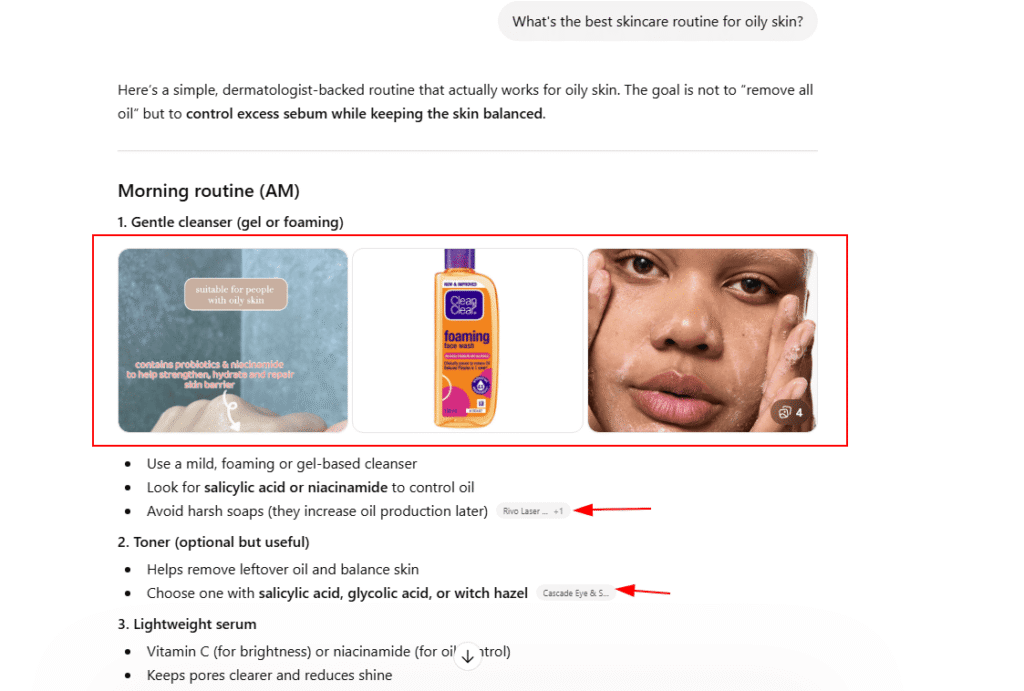

Here’s a simple example. I entered the query “What’s the best skincare routine for oily skin?”

Notice the Thinking dropdown?

One question, and the AI immediately expanded it into six distinct targeted searches to find the most accurate and well-structured final answer. To earn citations from AI models, your content needs to be the strongest match for those specific sub-queries.

What AI Search Tools Look for When Choosing Sources

These are the real ranking factors driving LLM citations. Once you nail these, the rest of your strategy gets a lot simpler.

1. Recency

AI tools are heavily biased toward recency. Newer AI models are also being trained to detect manipulation: when a model sees a 2026 publication date on facts that match what it learned from 2021 training data, it registers that as a conflict.

The actual factual claims in your content, what researchers refer to as “Subject-Relation-Object” triplets, need to reflect real, meaningful updates rather than a new timestamp slapped on old material. Content that hasn’t changed in any substantive way is essentially invisible to these systems.

And no, updating the year in your headline won’t fix it. AI models are capable of recognizing when a so-called refresh is purely surface-level. Changing “2025” to “2026” while leaving everything else untouched doesn’t signal fresh content to these systems.

What actually works is substantive change: updated statistics, revised language, and added context that reflects how the topic has evolved. High-traffic pages deserve a proper review at least every quarter, and anything that relies on data needs attention even more often than that.
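Before republishing, you can sanity-check whether a refresh is more than a date swap. The sketch below is an editor-side check only, not a description of how AI models evaluate freshness: it strips four-digit years from both versions and uses Python’s `difflib` to estimate how much of the remaining text changed. The function name is hypothetical.

```python
import re
from difflib import SequenceMatcher

# Matches four-digit years such as 2025 or 2026.
YEAR = re.compile(r"\b(?:19|20)\d{2}\b")

def substantive_change(old, new):
    """Share of the text that changed, ignoring years (0.0 means identical)."""
    old_words = YEAR.sub("", old).split()
    new_words = YEAR.sub("", new).split()
    return 1.0 - SequenceMatcher(None, old_words, new_words).ratio()

old = "Best routines for oily skin in 2025"
date_swap = "Best routines for oily skin in 2026"
real_update = "Best routines for oily skin in 2026, with updated SPF guidance"

print(substantive_change(old, date_swap))    # 0.0: only the year changed
print(substantive_change(old, real_update))  # greater than 0: new material was added
```

A score at or near zero means the page changed in name only, which is exactly the kind of refresh described above.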

2. Domain Authority

High-authority domains, particularly those with a DR of 80 or higher, are still getting cited by LLMs on a consistent basis. For AI systems, these domains represent a low-risk default. That said, the more significant development heading into 2026 is how topical authority is starting to carry real weight.

A niche site dedicated entirely to industrial drone repair can realistically compete with, and beat, a large general tech publication when it comes to those specific LLM citations.

AI models are getting sharper at identifying that a focused, purpose-built source tends to be more accurate than a broad platform skimming across topics. For smaller publishers, specialization is no longer a limitation. It is an actual advantage.

3. Semantic Relevance

AI search engines do not measure relevance the way traditional keyword-based search does. There is no exact-match logic at play. The underlying question being asked is far more direct: Does this content genuinely answer what the user came looking for?

A tightly focused page built around a single question will frequently outperform a longer, multi-angle piece on the same topic. In the context of AI search visibility, depth of coverage consistently wins over breadth.

The most practical way to calibrate your content against this standard?

Look at which sources are being pulled into AI Overviews and other AI-generated answers for the queries you care about. Pay attention to how targeted those sources are. That is the benchmark you are working toward, and it is a more achievable one than it might look.

4. Clean Formatting

AI models process content differently from human readers. Rather than reading top to bottom, AI models look for exact sentences and discrete chunks they can extract cleanly and reference with confidence. An answer that only makes sense after several paragraphs of buildup is one that most AI models will pass over entirely.

Making your content extractable starts with structure. Keep paragraphs short and focused on a single point. Use question-based headings that mirror real search behavior. Write answers that hold up on their own without leaning on pronouns or context from other parts of the page. Dense blocks of text where the actual answer is buried in the middle are the fastest way to get ignored.

Microsoft’s guidance on structuring content for AI consumption reinforces this directly: the more cleanly a direct answer can be pulled from your page, the better your odds of showing up in AI-generated answers.

Not sure how your content is performing across AI platforms right now? Track My Visibility’s free mini report gives you a quick snapshot of where your brand is being cited, which platforms are picking you up, and where you’re getting skipped entirely.

10 Proven Strategies to Get More AI References

Understanding what AI search tools prioritize when selecting content is only half the equation. The other half is knowing which strategies actually move the needle when it comes to earning more AI references for your brand. These 10 approaches are the most effective place to start.

1. Start by Learning What’s Already Getting Cited

Before building anything new, spend time understanding what’s already earning LLM citations in your industry. Pull up ChatGPT, Perplexity, Claude, Gemini, and Google AI Overviews, then run the exact queries your target audience is searching for.

Study what shows up.

- Which sources get cited repeatedly?

- Across what types of questions? How often?

Manual testing requires effort, but nothing gives you a more honest picture of what’s actually performing in AI search results.

This problem catches more online stores off guard than you’d expect.

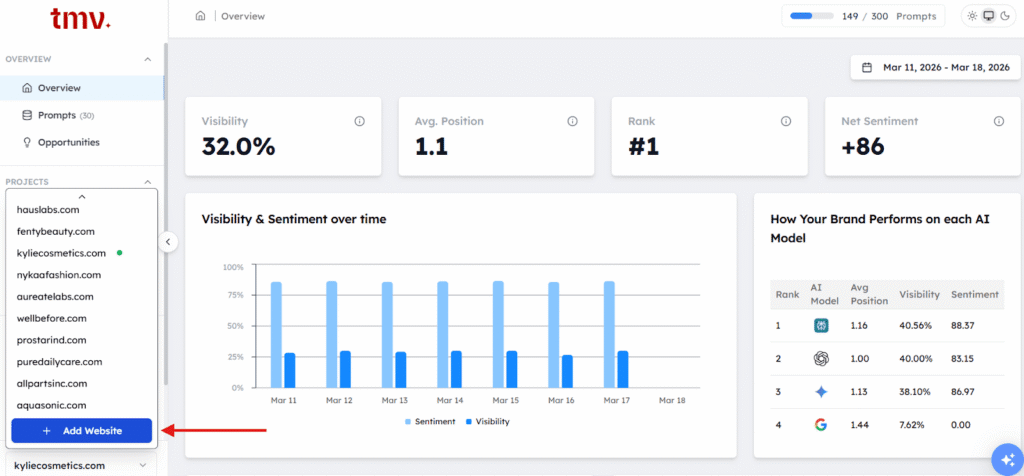

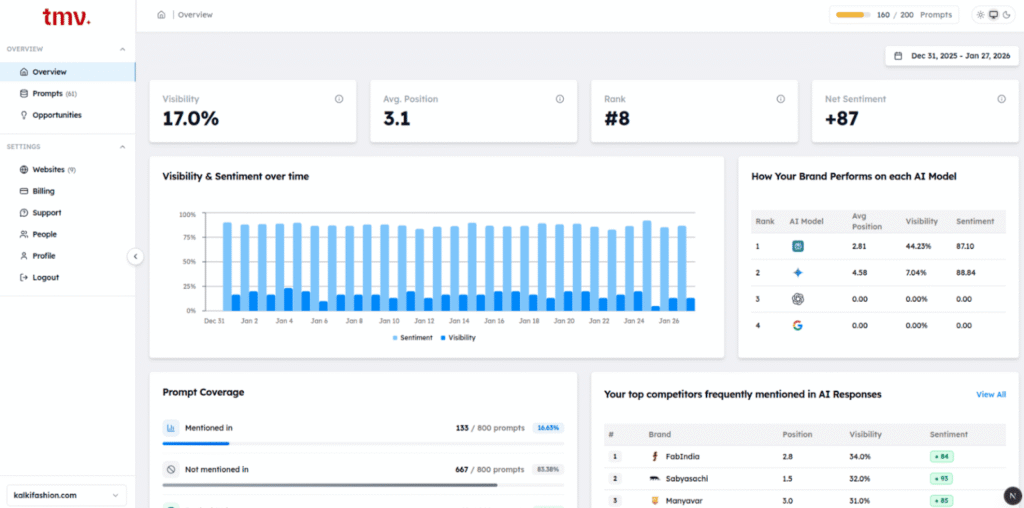

For running this kind of audit at scale, Track My Visibility is worth a look. It’s purpose-built to track how your brand and competitors appear across multiple AI platforms.

Focus on three things: which competitors are showing up most reliably as cited sources, what format their content takes when it gets pulled into AI-generated answers, and whether there are query types where no source is being cited at all. That third point is the most valuable signal. It means the AI has a gap it’s actively trying to fill, and well-positioned content can step right into that space.

Completing this audit gives you your baseline. Every piece of content you create and every page you optimize from this point forward should be evaluated against what the audit revealed.

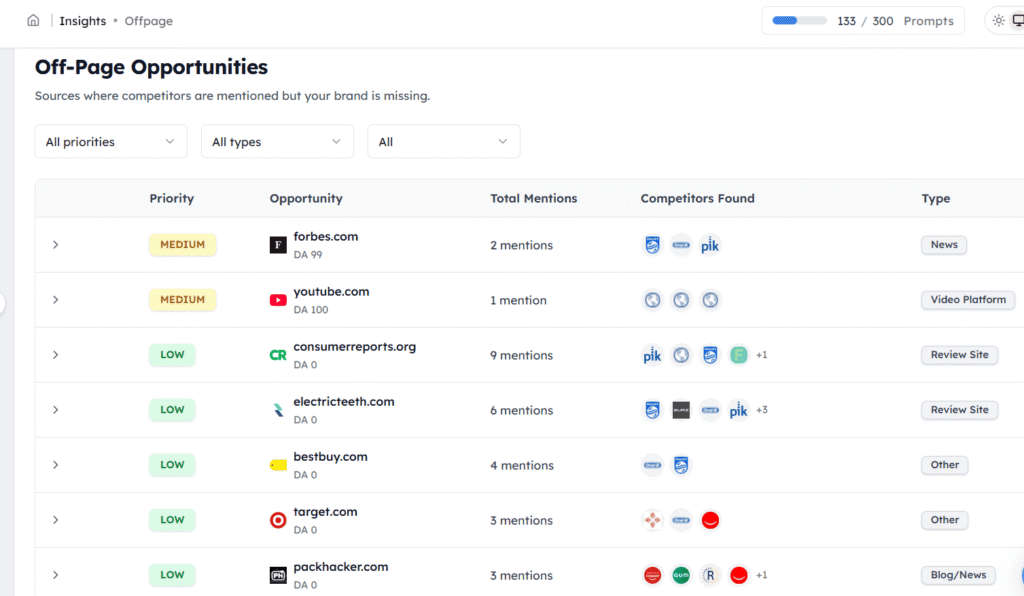

2. Identify Where Competitors Are Winning, and You Aren’t

A citation gap is where a competitor earns mentions from AI tools on a given topic, and your brand doesn’t. These are worth prioritizing because the groundwork is already laid. AI systems are actively pulling sources for those queries. Your task is to get in front of them.

Run competitor URLs through the Track My Visibility tool as a starting point. Then dig into your own data. Support tickets and sales call transcripts are good places to surface the questions your audience keeps asking.

Reddit threads, niche forums, and Q&A platforms often reveal questions that still lack a solid, trustworthy answer. Search autocomplete and “People Also Ask” results help round out the picture of what people are genuinely looking for.

Once you find a gap, the goal isn’t to mirror what’s already being cited. Write a more complete, cleaner, self-contained answer than what exists. That’s what earns placement.

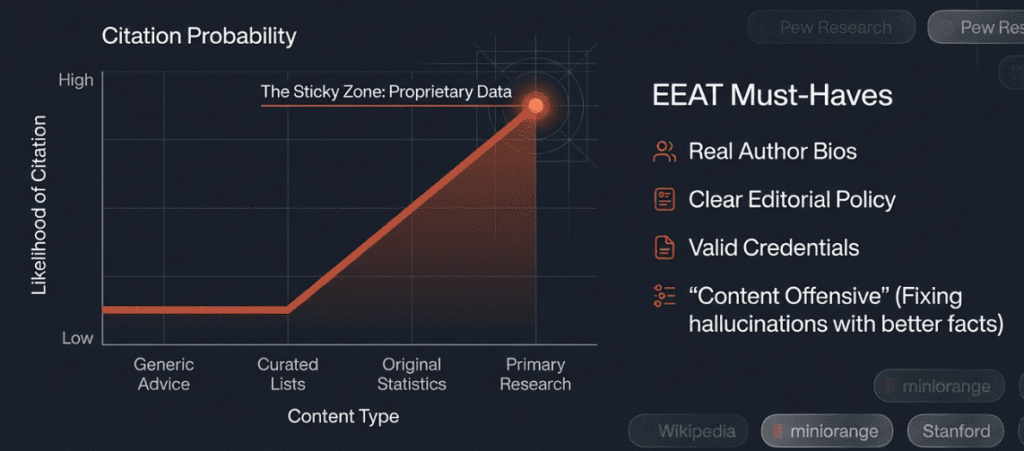

3. Use Your Own Data as a Citation Magnet

Original data is one of the most consistent ways to get cited by LLMs. When a model handles a question involving statistics or specific figures, it looks for traceable sources.

That’s the core reason it works. A vague claim gets ignored. A specific, sourced number gets referenced, and your brand becomes the primary source AI models point back to.

You don’t need a research team to do this. A survey of 50 to 100 respondents is enough, provided the methodology is stated clearly. Internal usage patterns, aggregated customer insights, and benchmarks produced by your own tools all count.

How you present the data matters. Put the number first. Note its source briefly. Keep the surrounding context lean so the stat can stand independently. If a key finding only appears in a table, write it out as a sentence as well. AI systems pull from plain text far more consistently than from table cells.

4. Build the Trust Signals AI Models Actually Look For

EEAT, which covers Experience, Expertise, Authoritativeness, and Trustworthiness, has moved well beyond its roots as a Google ranking consideration. AI models now actively apply these same criteria when deciding whether your content deserves a citation.

The signals aren’t complicated. Author bios with verifiable credentials, clearly stated editorial policies, named contributors on research pieces, and backlinks from reputable sources all function as authority signals. They communicate to AI systems that someone with genuine knowledge produced this content.

Content that could have come from anywhere tends to get treated as coming from nowhere in particular. Add a name, credentials, and a clear trail of accountability to change that.

5. Keep Your Content Fresh Enough to Stay Cited

Keeping content current is an ongoing process, not a box you check once. It needs to be built into how content teams plan and prioritize work from the start, not treated as a periodic scramble.

The right review frequency depends on content type. Data-heavy pages and statistics warrant a look every three to six months, or earlier when the underlying numbers shift. Evergreen how-to content can typically run about a year before needing a review.

Product comparisons and tool roundups go stale faster and benefit from a quarterly check. Trend and industry pieces should be revisited whenever the landscape meaningfully shifts.
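These review cadences are easy to turn into a simple schedule. The sketch below assumes day counts that approximate the intervals above (90 days for quarterly, 365 for a year) and uses hypothetical content-type labels and URLs. Trend pieces are left out because they depend on events, not on a fixed interval.

```python
from datetime import date, timedelta

# Approximate review intervals in days (assumed mapping of the cadences above).
REVIEW_INTERVAL_DAYS = {
    "data": 90,        # lower end of the three-to-six-month range
    "comparison": 90,  # quarterly check
    "how_to": 365,     # about a year
}

def next_review(last_updated, content_type):
    """Date on which a page of this type is next due for review."""
    return last_updated + timedelta(days=REVIEW_INTERVAL_DAYS[content_type])

def overdue(pages, today):
    """Return the URLs of pages whose review date has passed."""
    return [url for url, ctype, updated in pages if next_review(updated, ctype) <= today]

pages = [  # hypothetical inventory: (url, content type, last updated)
    ("/oily-skin-statistics", "data", date(2026, 1, 1)),
    ("/how-to-layer-spf", "how_to", date(2026, 1, 1)),
]
print(overdue(pages, today=date(2026, 5, 1)))
```

Running a check like this monthly keeps freshness part of planning instead of a periodic scramble.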

A small habit worth building: display the “last updated” date near the top of the page whenever revisions are made. It serves as a straightforward trust signal for both AI search systems and organic search results. Keep it prominent rather than tucking it away in the footer.

6. Make Your Content Easy for AI to Pull From

This is probably the single most effective change you can make right now.

Answer capsules are concise, self-contained responses placed immediately after a question-style heading. For a more thorough walkthrough, our guide on optimizing content for AI answers covers the complete method.

Here’s how it looks when applied:

Heading: How do LLM citations work?

Answer capsule: LLM citations occur when AI tools fetch content from the live web through RAG and then cite that source directly within their response.

From there, you expand with supporting context and detail. One rule worth remembering: keep hyperlinks out of the capsule entirely.

Put your links in the body content that follows the capsule, never inside it. Lead with the answer every time. Neither the reader nor the AI should have to work through background information before reaching the point.
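The capsule rules can be checked mechanically before a page goes live. This is a minimal sketch under stated assumptions: the 60-word limit is a hypothetical threshold (no specific length is given here), and the link pattern only catches Markdown links, bare URLs, and HTML anchor tags.

```python
import re

# Matches Markdown links, bare URLs, and opening HTML anchor tags.
LINK = re.compile(r"\[[^\]]*\]\([^)]*\)|https?://|<a\s", re.IGNORECASE)

def capsule_issues(heading, capsule, max_words=60):
    """Return a list of problems found in a heading and its answer capsule."""
    issues = []
    if not heading.strip().endswith("?"):
        issues.append("heading is not phrased as a question")
    if LINK.search(capsule):
        issues.append("capsule contains a hyperlink")
    if len(capsule.split()) > max_words:
        issues.append("capsule is longer than max_words")
    return issues

heading = "How do LLM citations work?"
capsule = (
    "LLM citations occur when AI tools fetch content from the live web "
    "through RAG and then cite that source directly within their response."
)
print(capsule_issues(heading, capsule))  # [] means the capsule passes every check
```

An empty list means the capsule leads with a question heading, stays short, and keeps links out.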

7. Get Cited Beyond Your Own Domain

Your website alone will not get you cited across every AI platform. Perplexity, for instance, draws heavily from user-generated content on Reddit, Quora, and LinkedIn. That means your AI search visibility strategy needs to operate off your domain as well.

Get involved in Reddit discussions, publish long-form content on LinkedIn, respond to questions on Quora, and gradually build relationships with journalists.

Once you are doing this with any consistency, tracking what is actually moving the needle becomes important. A tool like Track My Visibility makes this easier. It shows which platforms are picking up your content and where AI tools are already referencing you, so you can put your energy into what is gaining traction rather than guessing blindly.

When several credible platforms independently point back to the same information from you, AI tools are far more likely to treat you as the source.

8. Technical Side of Earning LLM Citations

Technical optimization for AI search shares a lot of ground with traditional SEO, but citations have a few specific requirements worth paying attention to.

Schema markup, including the Article, FAQPage, Organization, and Product types from schema.org, helps AI systems interpret your content correctly. FAQ schema tends to perform particularly well because it aligns with the question-and-answer format that AI models already work in. As a best practice, run your pages through Google’s Rich Results Test periodically, since broken markup can quietly reduce your citation rate without any obvious warning signs.
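For FAQ markup specifically, generating the JSON-LD from your question-and-answer pairs avoids hand-editing errors. The sketch below builds a schema.org `FAQPage` object in Python. The helper name `faq_jsonld` is hypothetical, and the output should still be validated with the Rich Results Test.

```python
import json

def faq_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)

markup = faq_jsonld([
    (
        "How do LLM citations work?",
        "LLM citations occur when AI tools fetch content from the live web "
        "through RAG and then cite that source directly within their response.",
    ),
])
# Wrap the JSON in a script tag before placing it in the page.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The question and answer text should match what is visibly on the page, so the markup describes the same capsule a reader sees.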

llms.txt is another element worth implementing. It lives at the site root like robots.txt, but it does a different job: rather than controlling crawler access, it is a proposed Markdown file that points AI systems to your most important content. Widespread adoption isn’t there yet, but setting it up early puts you ahead of the curve.

For local ecommerce brands, NAP consistency, meaning your Name, Address, and Phone number staying uniform across every platform, is non-negotiable. Page speed is also more consequential than most people assume. A slow-loading site can cause AI systems to time out before they ever retrieve your answer.

Track My Visibility gives you a concrete view of your current standing. You can identify which AI models are citing your brand, which prompts competitors are capturing that you’re missing, and where gaps exist in your citation quality. That visibility data removes the guesswork and tells you precisely where to direct your technical and content work.

9. Build Topical Authority Through Content Clusters

Covering a topic once isn’t enough. AI models pay attention to whether your site consistently answers questions within the same subject area, and a single page rarely signals that kind of authority.

A content cluster pairs one strong pillar page with several supporting articles, each going deep on a specific subtopic, all linking back to each other. Over time, this tells AI models that your domain is a reliable home for that subject, not just a one-off resource.

For example, a skincare brand with a pillar page on “routines for oily skin” backed by dedicated pages on cleansers, SPF, and overnight treatments will get cited far more consistently than a brand with one long page trying to cover all of it at once.

10. Earn Citations Through Digital PR and Journalist Outreach

When a credible publication references your brand or data, AI models treat that as a trust signal. It tells them that real editorial judgment stood behind your content, which carries more weight than anything you can signal from your own domain alone.

You don’t need a PR agency to make this work. Find journalists who regularly cover your niche and pitch them something genuinely useful, ideally original data from your own research. A single well-placed article in a trusted outlet can drive citations across AI platforms that your own pages would never earn on their own.

How Each AI Search Tool Selects Its Sources

The universal strategies covered earlier work across all platforms. That said, each AI system has its own preferences when it comes to picking sources.

| Platform | What it prefers | How to win visibility | Best citation sources | Best content format |
| --- | --- | --- | --- | --- |
| ChatGPT (OpenAI) | High-authority sites and pages that rank high in search | Build authority and earn major media coverage | Editorial media, industry publications | In-depth guides, data-backed explainers |
| Perplexity (Perplexity AI) | Community content from Reddit, Quora, and LinkedIn | Prioritize off-site and community content | Reddit, Quora, LinkedIn | Q&A posts, expert opinions |
| Google AI Overviews (Google) | Your own high-ranking indexed pages | Improve SEO, EEAT, schema, and page quality | First-party site content | Structured evergreen pages |
| Claude (Anthropic) | Well-sourced, transparent reasoning | Publish citation-ready, sourced content | Research articles, documentation | Methodical explainers |
| Gemini (Google) | Expert, well-cited content | Create precise reference content | First-party experts, trusted sources | Technical deep dives |

Why LLM Citations Matter for eCommerce Brands

The case for Large Language Model citations becomes clear once you look at what the data actually shows.

Adobe’s 2025 holiday season report documented a 693% year-over-year surge in retail AI-driven traffic, with travel, financial services, and tech sectors following close behind. But the more telling detail is what happens after that traffic arrives.

A Reddit user shared that after optimizing for AI visibility, AI became their third-largest traffic source and noted the traffic felt more qualified than traditional SEO traffic.

Visitors coming through AI answers convert at rates well above average, sometimes several times higher in certain verticals. By the time someone reaches your site through an AI citation, the AI has already done part of the selling. They arrive with a baseline of trust already established.

For e-commerce brands, the timing still works in your favor. The majority of eCommerce brands haven’t started building citation authority yet, which means the window to get ahead of the competition in AI search visibility is open. E-commerce brands that move now will be much harder to push out once this space gets crowded.

It’s also worth being clear-eyed about what citations actually do.

Rand Fishkin said, “Citations are correlated, but not causal with brand appearances in the results.”

They’re a meaningful signal, not a guaranteed outcome. The real objective is a sustained, consistent presence across AI platforms, not accumulating citation counts for their own sake.

There’s also a natural overlap with conventional SEO worth paying attention to. The factors that increase your chances of being cited, which is the goal of generative engine optimization (GEO), are the same ones that improve rankings in traditional search engines: original research, strong EEAT signals, clean formatting, and topical authority.

This is the foundation behind AEO and GEO, and understanding how they connect to SEO makes the overall strategy much easier to act on.

How to Track and Measure Your AI Search Visibility the Right Way

You can’t fix what you don’t track. So, the practical starting point is knowing how to monitor your AI search visibility across the platforms your audience actually uses.

Method 1: Track AI Citations Without Spending a Penny

Start with a simple test. Put together 20 to 30 specific questions that your customers commonly ask, then run them through ChatGPT, Perplexity, Claude, Google AI Overviews, and Gemini once a month.

Don’t just check whether you appear. For each answer, record direct links, unlinked mentions, where your competitors are showing up, and any ghost citations you come across.
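Once the results are in a spreadsheet, a short script can turn them into a per-platform citation rate you can compare month to month. The sketch below assumes each row is a `(platform, query, outcome)` tuple. The outcome labels `"link"`, `"mention"`, and `"absent"` are hypothetical and should be adapted to whatever your spreadsheet uses.

```python
from collections import defaultdict

def citation_rates(results):
    """Share of tested queries per platform that returned a direct link to you."""
    totals = defaultdict(int)
    linked = defaultdict(int)
    for platform, _query, outcome in results:
        totals[platform] += 1
        if outcome == "link":
            linked[platform] += 1
    return {platform: linked[platform] / totals[platform] for platform in totals}

results = [  # hypothetical audit rows
    ("ChatGPT", "best skincare routine for oily skin", "link"),
    ("ChatGPT", "affordable skincare routine for oily skin", "mention"),
    ("Perplexity", "best skincare routine for oily skin", "absent"),
]
print(citation_rates(results))
```

Counting only linked citations keeps the metric tied to referral traffic. Unlinked mentions can be tallied the same way as a separate brand-awareness number.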

Method 2: Using Tools and Analytics to Monitor AI Visibility

Inside GA4, traffic from AI search engines typically lands under direct traffic or carries referrer strings tied to specific platforms. Build segments to separate visits from known AI sources like perplexity.ai and chatgpt.com (formerly chat.openai.com), then monitor those conversion rates on their own.

Attribution is rarely clean since a portion of AI traffic arrives with no referrer attached. Even so, partial visibility data tells you more than nothing, and it highlights which content is genuinely pulling its weight.
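If you also work with raw referrer strings outside GA4, such as server logs or exported reports, the same split can be sketched in Python. The host list below is illustrative and incomplete: perplexity.ai and chat.openai.com come from the text above, and chatgpt.com is included as ChatGPT’s current domain. The function name is hypothetical.

```python
from typing import Optional
from urllib.parse import urlparse

# Known AI referrer hosts (illustrative; extend it with the platforms you see in your data).
AI_REFERRER_HOSTS = {
    "perplexity.ai": "Perplexity",
    "chat.openai.com": "ChatGPT",
    "chatgpt.com": "ChatGPT",
}

def ai_source(referrer: str) -> Optional[str]:
    """Return the AI platform behind a referrer URL, or None if unknown or missing."""
    if not referrer:
        return None  # no referrer attached, so the visit cannot be attributed
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)

print(ai_source("https://www.perplexity.ai/search?q=oily+skin"))
print(ai_source(""))
```

Visits that return `None` stay in the unattributed bucket, which reflects the partial visibility described above.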

Platforms like Track My Visibility are purpose-built to track brand presence across major AI platforms. If AI search visibility matters to your business goals, committing to at least one dedicated tracking tool is a worthwhile call.

What to Do When AI Gets Your Brand Wrong

AI systems get things wrong. They surface outdated pricing, retired features, or old brand names, and there is no formal channel to correct a language model the way you would dispute a search result.

The most reliable approach is to go after the source. When a model keeps citing inaccurate information about your brand, find the page it is likely pulling from and fix it. Structure the correct details so they are easy to extract: use answer capsules, lead with the accurate fact, and include a visible “last updated” date.

AI models naturally gravitate toward content that is fresh and easy to parse. If your updated page is cleaner and more direct than the outdated one, the model will start favoring it over time. You are not trying to override the system. You are just making the correct answer easier to reach than the incorrect one.

Common Mistakes That Are Killing Your Brand’s AI Search Visibility

Good intentions only go so far if your approach is still built around outdated SEO thinking. Below are the most common ways e-commerce brands end up undermining their own AI visibility without realizing it.

1. Adding Multiple Hyperlinks inside Answer Capsules

It sounds like it should help, but it doesn’t. E-commerce brands often assume that a well-linked answer capsule signals authority. The problem is that AI models are less likely to pull the exact snippet from capsules that contain hyperlinks. A link muddies attribution, and the model will frequently pass over that capsule in favor of a cleaner one.

The solution is straightforward: move your links into the supporting body content below the capsule. The capsule itself should be clean and contain no links.

2. Burying the Answer at the End of the Content

If the first thing someone reads is two or three paragraphs of setup before you get to the actual point, you’re writing for an older version of how search works. AI systems and modern readers share the same expectation: lead with the answer. Context, nuance, and background all have a place, just not before the core response.

3. Using One Strategy Across Every AI Search Tool

Optimizing for Google AI Mode is a genuinely different task from optimizing for Perplexity. What earns citations on ChatGPT does not automatically translate to Claude or other AI search engines. Each platform has its own logic for selecting and surfacing sources.

If more than one platform drives real traffic for your brand, your strategy needs to treat each one on its own terms. A single blanket approach will leave gaps.

4. Ignoring Third-Party Platforms

A site-only content strategy leaves significant citation potential on the table for e-commerce stores. Platforms like Perplexity actively favor community-driven sources, and they will surface a relevant Reddit thread or LinkedIn post ahead of your official brand page if one exists.

Your brand presence needs to extend to wherever your audience is already searching for answers, and those answers need to be genuinely worth referencing.

5. Not Tracking Negative Mentions

AI visibility is not one-directional. If the overall sentiment about your brand across the web leans negative, AI models will reflect that to users, often without any signal to you that it’s happening. Monitoring your own site is not enough.

You need a broader view of how AI search engines are characterizing your brand across different sources. If a model keeps surfacing an old PR problem or a critical review, that source needs to be addressed directly rather than left to keep circulating.

Where AI Citation Opportunities Are Heading Next

The way AI surfaces products is still changing, and keeping up with where it’s heading puts your eCommerce brand ahead of most competitors. Here’s what’s already in motion:

- Multimodal search is reshaping citation opportunities. AI models now process videos, images, and voice queries, not just text. The multimodal AI market is growing at a CAGR of 32.7% through 2034 (Research and Markets). A text-only strategy will gradually cost you visibility across these formats.

- Personalization is making content surfacing harder to predict. AI responses are increasingly shaped by individual user context and search history. What still works regardless: content that is useful, credible, and easy to extract.

- Commerce features are moving into AI responses fast. Product carousels, price comparisons, and AI-driven recommendations are already part of how answers get delivered. 72% of consumers now expect AI-powered shopping assistants (BigCommerce). For eCommerce brands, this is where the real LLM citation opportunity sits right now.

Your 30-Day Plan to Start Earning LLM Citations

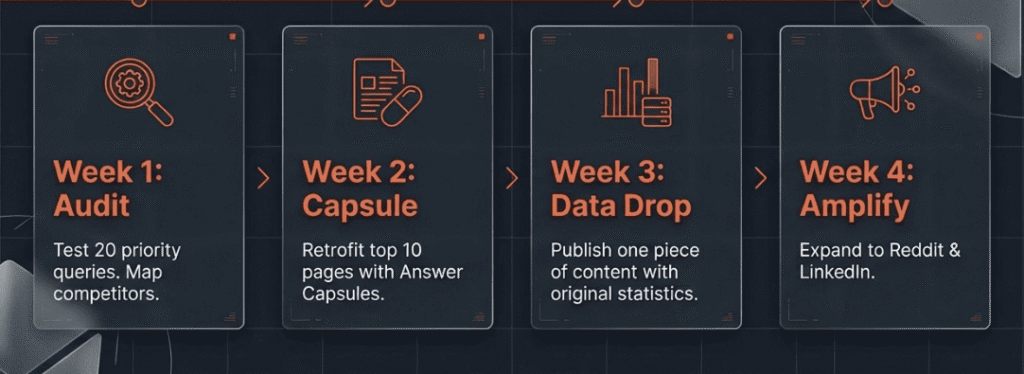

Week 1: Start with a manual audit. Query 20-30 of your target topics across ChatGPT, Perplexity, Claude, Gemini, and Google AI Overviews. Track every result in a spreadsheet. Using a structured checklist for AEO and GEO audits makes it easier to know what signals to look for and how to record them consistently.

Week 2: Add answer capsules to your 10 highest-priority pages, since these are the most likely to become cited pages. Rewrite your headings as direct questions, place concise self-contained answers immediately below each one, and push any supporting links into the body text further down.

Week 3: Publish or substantially revise five pieces of content built around original data or first-party insights. Open with the key stat, briefly explain how you gathered it, and format it so it’s easy for AI models to pull out and cite.

Week 4: Build a monitoring routine. At a minimum, set a monthly manual testing schedule to track your AI search visibility. If budget allows, bring in a dedicated AI visibility tracking tool to complement that process.

Months 2-3: Expand your off-site footprint. Develop an active presence on Reddit and LinkedIn, pinpoint where your brand mentions are missing in AI-generated answers, and start building journalist relationships to earn organic coverage over time.

Quick Summary

LLM citations are quickly emerging as one of the most valuable AI visibility metrics for e-commerce brands that depend on search traffic. The reassuring part is that the signals behind them, including content freshness, domain authority, clean formatting, and original extractable content, are factors you can directly influence.

E-commerce brands that take action now are getting ahead of most competitors, who haven’t touched this yet. That gap won’t last.

Start with the Week 1 audit to get a clear picture of where you currently stand. If you’d prefer to skip the manual work and track your AI search visibility across platforms from one place, Track My Visibility does exactly that. It monitors how consistently your brand gets cited across AI tools like ChatGPT, Perplexity, and Google AI Overviews, so there are no blind spots.

Wherever you begin, begin. Build from there.

FAQs

Does publishing more content increase LLM citations?

Not really. Volume alone won’t move the needle. A single well-structured, original, and credible page will get cited far more consistently than ten thin articles covering the same topic. AI models care about answer quality and extractability, not how much you’ve published.

Why is my competitor getting cited but not me?

This usually comes down to formatting, not quality. Your competitor’s page is probably easier for AI to extract a clean answer from. Check whether their content leads with a direct answer, uses question-based headings, and keeps paragraphs short. Those structural signals often matter more than content depth alone.

How long before I start appearing in LLM citations?

Most brands see movement within four to eight weeks of making structural changes. Perplexity tends to pick up fresh content faster than ChatGPT, which leans on more established sources. Starting with your highest-traffic pages gives you the quickest feedback loop.

Can a small eCommerce brand compete with large publishers?

Yes, and this is one of the more encouraging things about AI search. A focused niche store with genuine topical authority can consistently outrank a large general publication for specific queries. AI models increasingly reward depth of expertise over domain size.

Do LLM citations directly impact sales?

The connection is real but not always immediate. Visitors arriving through AI citations tend to convert at higher rates because the AI has already vouched for your brand before they click. Think of it as a high-trust referral that compounds over time as AI search continues to grow.

Resources:

- Similarweb’s 3rd Annual Global Ecommerce Report: Growth Shifts to Apps and AI

- Generative AI-Powered Shopping Rises with Traffic to U.S. Retail Sites

- When Facts Change: Probing LLMs on Evolving Knowledge with evolveQA

- AI traffic surges across industries, retail sees biggest gains | Adobe UK

- Transform Ecommerce with AI Shopping Assistants (2026)

- Research and Markets Report

- Rand Fishkin, 5-Minute Whiteboard